Mastering the Kanban Method - Unveiling the Hidden Gems of Effective Kanban Board Usage

Ever wondered how to supercharge your team's productivity? Say hello to Kanban, the dynamic method that brings clarity and efficiency to your projects.

Learn how differential privacy works by simulating an attack on data protected with that technique.

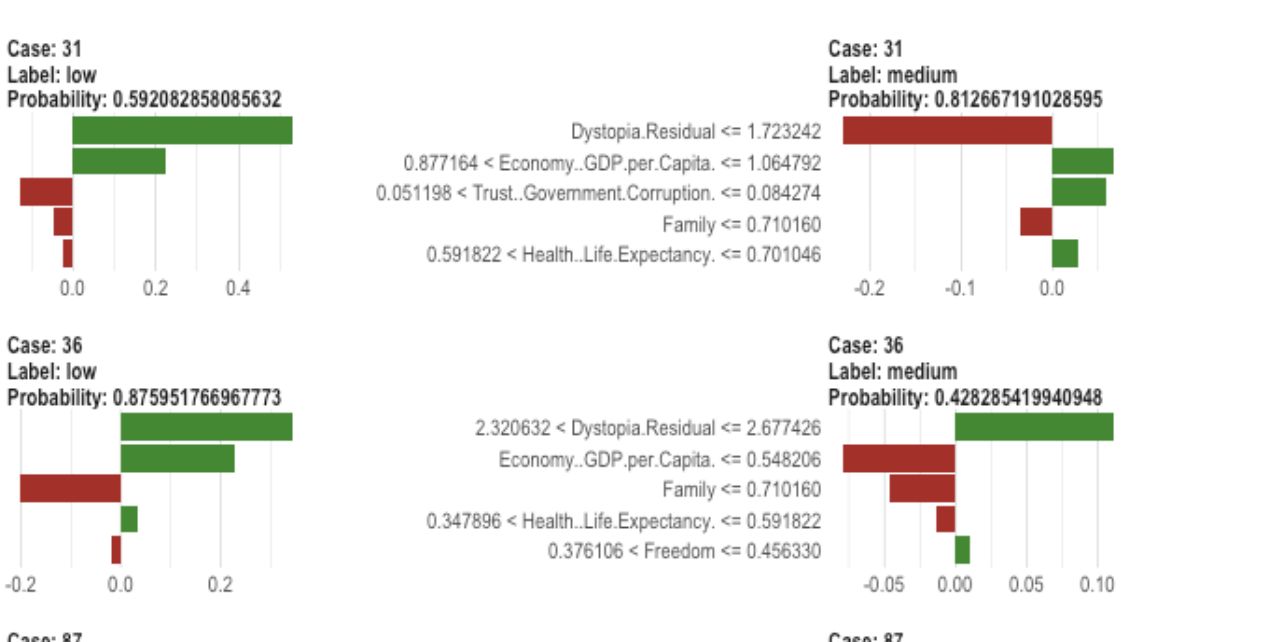

Don't let black box models hold you back. With LIME, you can interpret the predictions of even the most complex machine learning models.

Learn how to measure the quality of word and sentence embeddings in natural language processing (NLP), including intrinsic and extrinsic evaluation, and...

When it comes to explainable AI, LIME and SHAP are two popular methods for providing insights into the decisions made by machine learning models. What are...

Discover how the LIME method can help you understand the important factors behind your model's predictions in a simple, intuitive way.

Discover how the SHAP method can help you understand the important factors behind your model's predictions in a simple, intuitive way.

Making sense of AI's inner workings with KernelShap and TreeShap, the powerful tools for responsible AI.

Unveiling the mysteries of AI decisions? Let us dive into LIME, the tool that sheds light on the black box.

Imagine being able to prove something without actually revealing it. That is the power of zero-knowledge proofs, the technology that keeps your crypto safe.